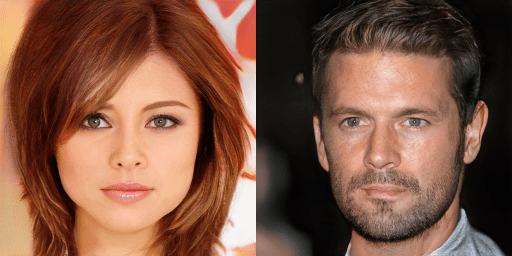

Two interesting papers were published this week demonstrating some remarkable progress in the use of GANs to produce and enhance imagery that is surprisingly realistic. The first paper, from Nvidia (http://research.nvidia.com/publication/2017-10_Progressive-Growing-of) – a company that produces GPUs that are often re-purposed to train deep learning networks, was trained to generate images of celebrities that look very realistic, as the following image demonstrates. These are not pictures of real people, but rather were generated by Nvidia’s implementation of a GAN network.

The second paper, by a group at the Max Planck Institute (http://www.is.mpg.de/16376353/EnhanceNET-PAT-Mehdi), demonstrates a machine learning technique to take a blurry image and produce a sharpened image that ends up being very similar to the original from which the blurry image was made. The first image, below, is a low-resolution image of a bird on a branch. The middle image is the one produced by the author’s GAN network. Compare the generated image to the original high-resolution image on the right. They are, indeed, very similar.

GANs are composed of two adversarial networks, the first network (the Generator) produces images that attempt to “fool” the second network into believing they are “real”. The second network tries its best to discriminate between real images and those produced by the Generator. Through multiple rounds of back-and-forth (like an arms race in AI land), the generator gets more and more capable of generating images that fool the discriminator and, as a result, generate images that appear to be very real. Traditional GANs start with an image consisting of random noise, and iteration by iteration, the generator begins to morph these noisy images into something that shares characteristics from the dataset that the discriminator uses to distinguish between real and artificially-generated images.

The Nvidia team used this traditional method, training its discriminator GANs on a database of celebrity images. The Max Planck team, by contrast, started with the blurry image of a sample from the discriminator training set and the generator, using this blurry image as the seed, began to iteratively refine the image until it was virtually indistinguishable from the original. It is conceivable that a large training set of domain-specific imagery might be capable of similarly improving the resolution of new images that were not in the original training set, but this is a topic for future research.

Applications of this technology might include, for instance, the processing of old imagery or movies shot in low resolution and re-issuing them to today’s audience as a high-def facsimile. Or, adding capabilities to tools such as Adobe Photoshop that photographers could use to improve the quality of their own images.